A while ago I was involved to look at the Hash Join (HJ) performance and its properties deeply to take some measurements. To demonstrate HJ performance characteristics and understand how things interact with each other, five tests have been given. In this blog post the following questions are trying to be addressed:

• How does Oracle DB partition the input relations (build, probe)? What factors does the number of partitions depend on? How the relations should be partitioned to improve performance of the HJ?

• How does Oracle calculate size of cluster(slot)? How much is it optimal?

• How can we identify the minimum memory requirements for one-pass execution based on the initial given parameters (num_rows, num_blocks, available memory, block size)?

• Why one-pass HJ execution is slower than multi-pass (in some cases)?, or why two one-pass join can give different response time? (we are talking about time for HASH JOIN operator only, not for time to acquire data from its inputs)

Annotations:

• T1, T2 are tables to be joined. |T1|, |T2|: size of the tables in blocks

• |M|: available memory size in blocks for HJ. (it is sub part of hash area like 0.9*HASH_AREA_SIZE, so after preserving some space for hash table overhead, bit vector, partition table, blocks for input/output etc.)

• NP: actual number of partitions identified by database

• |Pi|: Size of one partition in blocks

• C: cluster size in blocks

• HF1, HF2: hash functions

We are looking at the following simple query:

SELECT *

FROM t1, t2

WHERE t1.id = t2.id;

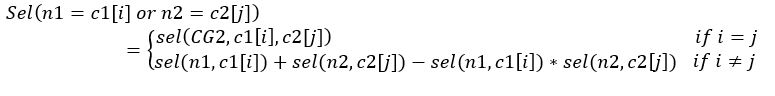

And it is assumed that there is no skew on join columns. In fact, only skew of build table`s column influence the HJ performance, so it doesn’t matter there is skew on join column of probe table. Also, we consider |M|<|T1|< |T2|. It means One-pass or Multi-pass HJ join will be performed. In this case the T1 table is partitioned with using a hash function (HF1), the database should partition T1 table in a way that each partition should not exceeds available memory and should try to keep many partitions in the memory as much as possible.

The maximum possible number of partitions is |M| but, Oracle uses multiblock read to write and read operation efficiently. In a single I/O request, C (cluster size) number of blocks are read or written at times. Therefore, maximum number of partitions will be:

MAX(NP)=|M|/C (F.1)

and it is called fan-out [2]. As it is seen it depends on cluster size and we need to understand how cluster size and factual number of partitions are identified by the database before starting join itself. So, we have upper limit for NP, we also can identify lower limit for NP:

MIN(NP)=|T1|/|M| (F.2)

So, NP should be identified in a way that the following condition should be true.

|T1|/|M|< NP < |M|/C (F.3)

Although, the C is still unknown, according to (F.3) the database tries to identify optimal/possible NP, because we have upper (even C=1 can be considered) and lower limits based on estimated size of build table and available memory. As it is mentioned NP is always is power of two [1], so that:

|T1|/|M|< NP = power(2, x) < |M|/C => log(|T1|/|M|,2) < |M|/C (F.4)

The NP could only be identified based on (F.4) if we have enough memory. So, the following condition (based on F.2) should be true to be able to use the formula (F.4)

|T1|/|M|<|M|/C (F.5)

Otherwise it leads to multi-pass execution. For example, if |M|=16, C=1, |T1|=506 then |T1|/|M|=31>16 exceeds MAX(NP) which leads to Multi-pass execution. That is why from (F.5) we get:

|T1|C < M*M => M > SQRT(|T1|C) (F.6)

If the (F.6) condition does not meet, then database selects cluster size smaller like C=1 and small number of partitions with large sizes to perform the join.

Now it is time to talk about the optimal cluster size for the HJ. Until that time we have identified minimum memory for hash join to perform One-pass execution via (F.6) and been able to identify possible NP via (F.4) – even until that time C=1 could be considered.

The optimal cluster size should be identified as big as possible to meet the condition (F.4). It means:

C<|M|/log(|T1|/|M|,2) <=> C<|M|/NP (F.7)

The following section we will use these formulas to check the database`s decisions and optimal values

The Test cases

The environment and data:

OS: Red Hat EL 7.3 64bit

DB: Oracle DB Enterprise Edition 19.3.0.0

The tables:

create table t1

tablespace users

as

select 1000+rownum as id

,trunc(dbms_random.value(0,1000)) c1

, rpad('x', 10000) as f1

, rpad('y', 10000) as f2

from dual

connect by

level <= 3e4

;

create table t2

tablespace users

as

select 10001+rownum as id

, trunc(sysdate)-trunc(level/1000) dat

, round(level/10,2) amount

, rpad('a', 10000) as f1

, rpad('b', 10000) as f2

from dual

connect by

level <= 6e4

;

SELECT segment_name, blocks, bytes / 1024 / 1024 mb

FROM user_segments

WHERE segment_name IN ('T1', 'T2');

SEGMENT BLOCKS MB

------- ---------- ----------

T1 60416 472

T2 120832 944

execute dbms_stats.gather_table_stats(null,'t1');

execute dbms_stats.gather_table_stats(null,'t2');

SELECT *

FROM t1, t2

WHERE t1.id = t2.id;

Before execution of the all test cases, the database buffer cache and Linux cache had been flushed out.

The TEST1

We are setting PGA as 20M and look at the execution statistics.

alter system set pga_aggregate_target=20M;

alter session set tracefile_identifier='PGA_20M_CASE1';

alter session set events '10053 trace name context forever';

alter session set events '10104 trace name context forever';

SELECT *

FROM t1, t2

WHERE t1.id = t2.id;

alter session set events '10104 trace name context off';

alter session set events '10053 trace name context off';

SQL execution stats:

SQL_ID a8dmqqcbak2uu, child number 0

-------------------------------------

SELECT * FROM t1, t2 WHERE t1.id = t2.id

Plan hash value: 1838229974

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 20999 |00:00:10.23 | 270K| 269K| 89418 | | | | |

|* 1 | HASH JOIN | | 1 | 30000 | 20999 |00:00:10.23 | 270K| 269K| 89418 | 244M| 14M| 12M (1)| 710M|

| 2 | TABLE ACCESS FULL| T1 | 1 | 30000 | 30000 |00:00:00.29 | 90004 | 60002 | 0 | | | | |

| 3 | TABLE ACCESS FULL| T2 | 1 | 60000 | 60000 |00:00:00.51 | 180K| 120K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T1"."ID"="T2"."ID")

As it is seen, the SQL has been done with One-pass execution. Let’s look at the HJ trace file:

kxhfInit(HJ[1]): enter

kxhfInit(HJ[1]): exit

*** RowSrcId: 1 HASH JOIN STATISTICS (INITIALIZATION) ***

Join Type: INNER join

Original hash-area size: 7760702

Memory for slot table: 7569408

Calculated overhead for partitions and row/slot managers: 191294

Hash-join fanout: 128

Number of partitions: 128

Number of slots: 132

Multiblock IO: 7

Block size(KB): 8

Cluster (slot) size(KB): 56

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 128

Per partition bit vector length(KB): 1

Maximum possible row length: 8071

Estimated build size (KB): 229

Estimated Build Row Length (includes overhead): 8031

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

kxhfAddChunk(HJ[1]): add chunk 0 (sz=256) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7f052684cb70, slotTab=0x7f052684cd90) added

kxhfWrite(HJ[1]): hash-join is spilling to disk

*** 2020-05-16T06:33:09.460663-04:00

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=8023)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 8023

Revised build size: 235036KB

......

--the first pair of partitions

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:193 size=(28 slots, 1568K)

PROBE PARTITION: nrows:406 size=(58 slots, 3248K)

kxhfAddChunk(HJ[1]): add chunk 0 (sz=256) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7f6e33d37398, slotTab=0x7f6e33d375b8) added

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 196:

Number of rows left to be iterated over (start of function): 193

Number of rows iterated over this function call: 193

Number of rows left to be iterated over (end of function): 0

### Hash table ###

.........................

--the last pair of partitions

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:274 size=(39 slots, 2184K)

PROBE PARTITION: nrows:491 size=(69 slots, 3864K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 273:

Number of rows left to be iterated over (start of function): 274

Number of rows iterated over this function call: 274

Number of rows left to be iterated over (end of function): 0

### Hash table ###

According to (F.4):

235036/ (7569408/1024)<NP < 7569408/1024/8/C => 32 < NP< 924/C (upper limit is defined by size of C).

Although NP = 64 could have been enough to perform One-pass execution, the NP has been selected as 128. In general, large number of partitions allows DB to keep some of them in memory at the end of the build phase and helps to deal with skew problem efficiently. But in the test, it is not a such case.

In contrast, there is a problem with large number of partitions and small amount of memory, we will look at it on the next sections.

According to (F.7) cluster size will be:

C < (7760702/1024/8)/128 => C< 7.4

and optimal C will be 7. In older versions the number of clusters was controlled by _hash_multiblock_io_count parameter. Even if you set this parameter, it will not influence the join process by default (in case of CPU costing and automatic work area management is enabled)

The TEST2

In this case we have reduced PGA to 15M and performed same test.

SQL_ID a8dmqqcbak2uu, child number 0

-------------------------------------

SELECT * FROM t1, t2 WHERE t1.id = t2.id

Plan hash value: 1838229974

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 20999 |00:00:10.13 | 270K| 310K| 88914 | | | | |

|* 1 | HASH JOIN | | 1 | 30000 | 20999 |00:00:10.13 | 270K| 310K| 88914 | 244M| 14M| 6576K (2)| 706M|

| 2 | TABLE ACCESS FULL| T1 | 1 | 30000 | 30000 |00:00:00.28 | 90004 | 60002 | 0 | | | | |

| 3 | TABLE ACCESS FULL| T2 | 1 | 60000 | 60000 |00:00:00.51 | 180K| 120K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T1"."ID"="T2"."ID")

HJ stats:

Join Type: INNER join

Original hash-area size: 4061596

Memory for slot table: 3899392

Calculated overhead for partitions and row/slot managers: 162204

Hash-join fanout: 64

Number of partitions: 64

Number of slots: 68

Multiblock IO: 7

Block size(KB): 8

Cluster (slot) size(KB): 56

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 128

Per partition bit vector length(KB): 2

Maximum possible row length: 8071

Estimated build size (KB): 229

Estimated Build Row Length (includes overhead): 8031

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

kxhfAddChunk(HJ[1]): add chunk 0 (sz=128) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7fcdbe6a0908, slotTab=0x7fcdbe6a0b28) added

kxhfWrite(HJ[1]): hash-join is spilling to disk

*** 2020-05-16T07:31:50.750203-04:00

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=8023)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 8023

Revised build size: 235036KB

According to (F.4) :

235036/(3899392/1024)< NP< (3899392/1024/8/C)=> 62< NP < 476/C.

So, NP was selected as 64. Also, from (f.7) :

C < (3899392/1024/8/64) = 7.4

C=7 was selected.

As you noticed that the SQL was executed in two-pass mode, but it was supposed to be executed in one-pass mode. Because the condition (F.6) is ture, so that:

M=3899392 < SQRT(235036*8*7)= 3628KB= 3715072!

Why did that happen? We have had enough memory (68 slots) to store each of 64 partitions.

There are the following correlated reasons to lead multi-pass join:

1) The rows between partitions were not evenly distributed

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 1) ***

Total number of partitions: 64

Number of partitions left in memory: 0

Total number of rows in in-memory partitions: 0

(used as preliminary number of buckets in hash table)

Estimated max # of build rows that can fit in avail memory: 2716

### Partition Distribution ###

Partition:0 rows:486 clusters:0 slots:0 kept=0

Partition:1 rows:488 clusters:0 slots:0 kept=0

Partition:2 rows:490 clusters:0 slots:0 kept=0

Partition:3 rows:504 clusters:0 slots:0 kept=0

Partition:4 rows:468 clusters:0 slots:0 kept=0

Partition:5 rows:465 clusters:0 slots:0 kept=0

Partition:6 rows:447 clusters:0 slots:0 kept=0

Partition:7 rows:455 clusters:0 slots:0 kept=0

Partition:8 rows:437 clusters:0 slots:0 kept=0

Partition:9 rows:484 clusters:0 slots:0 kept=0

Partition:10 rows:461 clusters:0 slots:0 kept=0

Partition:11 rows:456 clusters:0 slots:0 kept=0

Partition:12 rows:483 clusters:0 slots:0 kept=0

Partition:13 rows:485 clusters:0 slots:0 kept=0

Partition:14 rows:442 clusters:0 slots:0 kept=0

Partition:15 rows:479 clusters:0 slots:0 kept=0

Partition:16 rows:507 clusters:0 slots:0 kept=0

Partition:17 rows:460 clusters:0 slots:0 kept=0

Partition:18 rows:494 clusters:0 slots:0 kept=0

Partition:19 rows:467 clusters:0 slots:0 kept=0

Partition:20 rows:463 clusters:0 slots:0 kept=0

Partition:21 rows:508 clusters:0 slots:0 kept=0

Partition:22 rows:493 clusters:0 slots:0 kept=0

Partition:23 rows:448 clusters:0 slots:0 kept=0

Partition:24 rows:482 clusters:0 slots:0 kept=0

Partition:25 rows:447 clusters:0 slots:0 kept=0

Partition:26 rows:467 clusters:0 slots:0 kept=0

Partition:27 rows:503 clusters:0 slots:0 kept=0

Partition:28 rows:419 clusters:0 slots:0 kept=0

Partition:29 rows:476 clusters:0 slots:0 kept=0

Partition:30 rows:490 clusters:0 slots:0 kept=0

Partition:31 rows:437 clusters:0 slots:0 kept=0

Partition:32 rows:434 clusters:0 slots:0 kept=0

Partition:33 rows:448 clusters:0 slots:0 kept=0

Partition:34 rows:462 clusters:0 slots:0 kept=0

Partition:35 rows:470 clusters:0 slots:0 kept=0

Partition:36 rows:486 clusters:0 slots:0 kept=0

Partition:37 rows:451 clusters:0 slots:0 kept=0

Partition:38 rows:457 clusters:0 slots:0 kept=0

Partition:39 rows:476 clusters:0 slots:0 kept=0

Partition:40 rows:508 clusters:0 slots:0 kept=0

Partition:41 rows:454 clusters:0 slots:0 kept=0

Partition:42 rows:460 clusters:0 slots:0 kept=0

Partition:43 rows:529 clusters:0 slots:0 kept=0

Partition:44 rows:474 clusters:0 slots:0 kept=0

Partition:45 rows:465 clusters:0 slots:0 kept=0

Partition:46 rows:486 clusters:0 slots:0 kept=0

Partition:47 rows:423 clusters:0 slots:0 kept=0

Partition:48 rows:467 clusters:0 slots:0 kept=0

Partition:49 rows:454 clusters:0 slots:0 kept=0

Partition:50 rows:459 clusters:0 slots:0 kept=0

Partition:51 rows:474 clusters:0 slots:0 kept=0

Partition:52 rows:446 clusters:0 slots:0 kept=0

Partition:53 rows:429 clusters:0 slots:0 kept=0

Partition:54 rows:478 clusters:0 slots:0 kept=0

Partition:55 rows:479 clusters:0 slots:0 kept=0

Partition:56 rows:476 clusters:0 slots:0 kept=0

Partition:57 rows:447 clusters:0 slots:0 kept=0

Partition:58 rows:440 clusters:0 slots:0 kept=0

Partition:59 rows:486 clusters:0 slots:0 kept=0

Partition:60 rows:501 clusters:0 slots:0 kept=0

Partition:61 rows:447 clusters:0 slots:0 kept=0

Partition:62 rows:464 clusters:0 slots:0 kept=0

Partition:63 rows:479 clusters:0 slots:0 kept=0

The smaller partition has 419 rows (Partition:28) and the largest has 529 rows (Partition:43). There is an enough gap between the partitions. Despite fact that there is no skew on join column, but we have skew after partitioned the rows. It is due to hash collision of the function which has partitioned the table rows. It indicates that it is not easy to attain the perfect hash function to distribute rows evenly. This is one big problem in HJ processing. Now think about if your data (join column) is skewed or build table is an intermediate result set or join skew take place then how would be the result? To deal with such cases optimizer should produce more partitions (lots of) as it has been mentioned above.

2) Multi-Pass (2 pass) join was not used for all partitions, so that 13 out of 64 partitions were joined with one-pass, for example:

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:419 size=(59 slots, 3304K)

PROBE PARTITION: nrows:870 size=(123 slots, 6888K)

kxhfAddChunk(HJ[1]): add chunk 0 (sz=128) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7fcdbdea11c8, slotTab=0x7fcdbdea13e8) added

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 413:

Number of rows left to be iterated over (start of function): 419

Number of rows iterated over this function call: 419

Number of rows left to be iterated over (end of function): 0

kxhfResetIter(HJ[1]): 0x7fcdbe5eac70

qerhnFetchPhase2(HJ[1]): building a hash table

kxhfResize(HJ[1]): enter, resize to 65 slots (numAlloc=62, max=64)

kxhfResize(HJ[1]): exit, resized to 65 slots (numAlloc=62, max=65)

*** (continued) HASH JOIN BUILD HASH TABLE (PHASE 1) ***

Reusing hash-table. Number of buckets: 256

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:423 size=(60 slots, 3360K)

PROBE PARTITION: nrows:887 size=(125 slots, 7000K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 420:

Number of rows left to be iterated over (start of function): 423

Number of rows iterated over this function call: 423

Number of rows left to be iterated over (end of function): 0

The first two-pass join was:

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:459 size=(65 slots, 3640K)

PROBE PARTITION: nrows:941 size=(133 slots, 7448K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 448:

Number of rows left to be iterated over (start of function): 459

Number of rows iterated over this function call: 456

Number of rows left to be iterated over (end of function): 3

And the last two-pass join:

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:529 size=(75 slots, 4200K)

PROBE PARTITION: nrows:939 size=(132 slots, 7392K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 448:

Number of rows left to be iterated over (start of function): 529

Number of rows iterated over this function call: 456

Number of rows left to be iterated over (end of function): 73

As you noted that even only the few rows (73) from the biggest partition were not be able to be included to the first iteration. The reason is that Oracle considered 68 slots for hash table but, in fact it used only 64 slots (448 blocks) for hash table. Even 68 slots would not be enough due to 75 slots should have been required to perform one-pass join. It indicates that the memory calculated based on F.6 should be increased a little bit to provide one-pass join.

3) It is not directly related to that case; the point is optimizer uses average row length for estimating build table. Different rows in different partitions can have different row sizes. If some of partitions can have more rows than other and their row lengths are greater than average, then these partitions will not fit the memory (calculated by optimizer).

Note: Although, only little memory needs to be increased for one-pass execution, but it is not simple to get it from auto PGA mode. For example, with PGA target = 16M,17M,18M and 19M, the size of HASH_AREA_SIZE was changed only for PGA=19M significantly, set to 7760702, the size of hash table (memory slot) was 7569408. For 16M,17M,18M HASH_AREA_SIZE was constant as 4061596 and hash table size was 3899392.

The TEST3

In this case, we are performing the same test but with manual work area mode. According the TEST1, the database used HASH_AREA_SIZE as 7760702 and the same amount of memory is going to be used for that case also.

alter session set tracefile_identifier='HA_1';

alter session set hash_area_size=7760702;

alter session set workarea_size_policy=manual;

alter session set events '10053 trace name context forever';

alter session set events '10104 trace name context forever';

SELECT *

FROM t1, t2

WHERE t1.id = t2.id;

SQL_ID a8dmqqcbak2uu, child number 0

-------------------------------------

SELECT * FROM t1, t2 WHERE t1.id = t2.id

Plan hash value: 1838229974

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 20999 |00:00:05.44 | 270K| 632K| 89110 | | | | |

|* 1 | HASH JOIN | | 1 | 30000 | 20999 |00:00:05.44 | 270K| 632K| 89110 | 244M| 14M| 7839K (8)| 1330M|

| 2 | TABLE ACCESS FULL| T1 | 1 | 30000 | 30000 |00:00:00.26 | 90004 | 60002 | 0 | | | | |

| 3 | TABLE ACCESS FULL| T2 | 1 | 60000 | 60000 |00:00:00.51 | 180K| 120K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T1"."ID"="T2"."ID")

HJ statistics:

kxhfInit(HJ[1]): enter

kxhfInit(HJ[1]): exit

*** RowSrcId: 1 HASH JOIN STATISTICS (INITIALIZATION) ***

Join Type: INNER join

Original hash-area size: 7429892

Memory for slot table: 7135232

Calculated overhead for partitions and row/slot managers: 294660

Hash-join fanout: 8

Number of partitions: 8

Number of slots: 13

Multiblock IO: 67

Block size(KB): 8

Cluster (slot) size(KB): 536

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 256

Per partition bit vector length(KB): 32

Maximum possible row length: 8071

Estimated build size (KB): 229

Estimated Build Row Length (includes overhead): 8031

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

kxhfAddChunk(HJ[1]): add chunk 0 (sz=13) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7fed50509270, slotTab=0x7fed50509138) added

kxhfWrite(HJ[1]): hash-join is spilling to disk

*** 2020-05-18T08:34:56.297588-04:00

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=8023)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 8023

Revised build size: 235036KB

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:3727 size=(55 slots, 29480K)

PROBE PARTITION: nrows:7519 size=(111 slots, 59496K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 536:

Number of rows left to be iterated over (start of function): 3727

Number of rows iterated over this function call: 545

Number of rows left to be iterated over (end of function): 3182

### Hash table ###

.........

kxhfResetIter(HJ[1]): 0x7fed507e8fd0

qerhnFetchPhase2(HJ[1]): building a hash table

*** RowSrcId: 1 HASH JOIN GET FLUSHED PARTITIONS (PHASE 2) ***

Getting a pair of flushed partitions.

BUILD PARTITION: nrows:3840 size=(57 slots, 30552K)

PROBE PARTITION: nrows:7639 size=(113 slots, 60568K)

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 2) ***

Number of blocks that may be used to build the hash table 536:

Number of rows left to be iterated over (start of function): 3840

Number of rows iterated over this function call: 545

Number of rows left to be iterated over (end of function): 3295

The performance of the TEST3 is much better than TEST1. Although the TEST3 was done in multi-pass (8) mode, it gave us ~2 times better response time. As execution plans tell us the TEST3 did lots of reads (due to 8 passes for probe table) than TEST1 (write requests is comparable) but time spent is very less. Of course, cluster size influenced to get that result. In TEST1 small cluster size and large number of partitions were used, but in TEST3 large cluster size but small number of partitions were used, that is why response time reduced.

Before continuing thinking lets have a quick look at the SQL trace files:

For TEST1:

SELECT *

FROM t1, t2

WHERE t1.id = t2.id

call count cpu elapsed disk query current rows

------- ------ -------- ---------- ---------- ---------- ---------- ----------

Parse 1 0.00 0.00 0 0 0 0

Execute 1 0.00 0.00 0 0 0 0

Fetch 1401 2.53 10.26 269423 270009 0 20999

------- ------ -------- ---------- ---------- ---------- ---------- ----------

total 1403 2.53 10.27 269423 270009 0 20999

Misses in library cache during parse: 1

Optimizer mode: ALL_ROWS

Parsing user id: 106

Number of plan statistics captured: 1

Rows (1st) Rows (avg) Rows (max) Row Source Operation

---------- ---------- ---------- ---------------------------------------------------

20999 20999 20999 HASH JOIN (cr=270065 pr=269434 pw=89418 time=10231586 us starts=1 cost=81831 size=480930000 card=30000)

30000 30000 30000 TABLE ACCESS FULL T1 (cr=90004 pr=60002 pw=0 time=291160 us starts=1 cost=10474 size=240330000 card=30000)

60000 60000 60000 TABLE ACCESS FULL T2 (cr=180005 pr=120003 pw=0 time=510333 us starts=1 cost=20929 size=481200000 card=60000)

Elapsed times include waiting on following events:

Event waited on Times Max. Wait Total Waited

---------------------------------------- Waited ---------- ------------

SQL*Net message to client 1401 0.00 0.00

db file sequential read 5 0.00 0.00

PGA memory operation 72 0.00 0.00

direct path read 1428 0.00 0.36

direct path write temp 12774 0.01 8.41

direct path read temp 12774 0.00 0.11

SQL*Net more data to client 4 0.00 0.00

SQL*Net message from client 1401 0.00 0.18

********************************************************************************

For TEST3:

SELECT *

FROM t1, t2

WHERE t1.id = t2.id

call count cpu elapsed disk query current rows

------- ------ -------- ---------- ---------- ---------- ---------- ----------

Parse 1 0.00 0.00 0 0 0 0

Execute 1 0.00 0.00 0 0 0 0

Fetch 1401 2.50 5.49 632858 270009 0 20999

------- ------ -------- ---------- ---------- ---------- ---------- ----------

total 1403 2.50 5.49 632858 270009 0 20999

Misses in library cache during parse: 1

Optimizer mode: ALL_ROWS

Parsing user id: 106

Number of plan statistics captured: 1

Rows (1st) Rows (avg) Rows (max) Row Source Operation

---------- ---------- ---------- ---------------------------------------------------

20999 20999 20999 HASH JOIN (cr=270009 pr=632858 pw=89110 time=5443216 us starts=1 cost=75687 size=480930000 card=30000)

30000 30000 30000 TABLE ACCESS FULL T1 (cr=90004 pr=60002 pw=0 time=264528 us starts=1 cost=10474 size=240330000 card=30000)

60000 60000 60000 TABLE ACCESS FULL T2 (cr=180005 pr=120003 pw=0 time=514099 us starts=1 cost=20929 size=481200000 card=60000)

Elapsed times include waiting on following events:

Event waited on Times Max. Wait Total Waited

---------------------------------------- Waited ---------- ------------

SQL*Net message to client 1401 0.00 0.00

Disk file operations I/O 2 0.00 0.00

db file sequential read 5 0.00 0.00

PGA memory operation 17 0.00 0.00

direct path read 1428 0.00 0.41

direct path write temp 1330 0.02 3.61

direct path read temp 6759 0.00 0.36

SQL*Net more data to client 4 0.00 0.00

SQL*Net message from client 1401 0.00 0.18

********************************************************************************

As you see, problem is not related to (re)reading of the data (rereading probe table), in contrast it is related to writing corresponding partition data to their partitions on the temporary tablespace.

From the SQL trace files it is easily could be seen how the process was done (how the events appeared in sequence)

For TEST1:

-- writing build partitions to the disk in the build phase

WAIT #140201128746576: nam='PGA memory operation' ela= 7 p1=262144 p2=1 p3=0 obj#=79274 tim=105618054693

kxhfWrite(HJ[1]): hash-join is spilling to disk

WAIT #140201128746576: nam='direct path write temp' ela= 4189 file number=201 first dba=65408 block cnt=7 obj#=79274 tim=105618059045

WAIT #140201128746576: nam='direct path write temp' ela= 605 file number=201 first dba=65422 block cnt=7 obj#=79274 tim=105618059915

WAIT #140201128746576: nam='direct path write temp' ela= 410 file number=201 first dba=65436 block cnt=7 obj#=79274 tim=105618060373

WAIT #140201128746576: nam='direct path read' ela= 118 file number=7 first dba=1410 block cnt=126 obj#=79274 tim=105618060605

WAIT #140201128746576: nam='direct path write temp' ela= 433 file number=201 first dba=65450 block cnt=7 obj#=79274 tim=105618061192

WAIT #140201128746576: nam='direct path write temp' ela= 432 file number=201 first dba=65464 block cnt=7 obj#=79274 tim=105618061697

WAIT #140201128746576: nam='direct path write temp' ela= 421 file number=201 first dba=65478 block cnt=7 obj#=79274 tim=105618062212

WAIT #140201128746576: nam='direct path write temp' ela= 416 file number=201 first dba=65492 block cnt=7 obj#=79274 tim=105618062749

WAIT #140201128746576: nam='direct path write temp' ela= 428 file number=201 first dba=65506 block cnt=7 obj#=79274 tim=105618063245

WAIT #140201128746576: nam='direct path write temp' ela= 417 file number=201 first dba=65520 block cnt=7 obj#=79274 tim=105618063806

WAIT #140201128746576: nam='direct path write temp' ela= 760 file number=201 first dba=68096 block cnt=7 obj#=79274 tim=105618064613

WAIT #140201128746576: nam='direct path write temp' ela= 439 file number=201 first dba=68110 block cnt=7 obj#=79274 tim=105618065146

WAIT #140201128746576: nam='direct path read' ela= 424 file number=7 first dba=1538 block cnt=126 obj#=79274 tim=105618065604

WAIT #140201128746576: nam='direct path write temp' ela= 3772 file number=201 first dba=65429 block cnt=7 obj#=79274 tim=105618069473

WAIT #140201128746576: nam='direct path write temp' ela= 416 file number=201 first dba=68131 block cnt=7 obj#=79274 tim=105618070027

WAIT #140201128746576: nam='direct path write temp' ela= 458 file number=201 first dba=68145 block cnt=7 obj#=79274 tim=105618070523

WAIT #140201128746576: nam='direct path write temp' ela= 444 file number=201 first dba=68159 block cnt=7 obj#=79274 tim=105618071097

WAIT #140201128746576: nam='direct path write temp' ela= 787 file number=201 first dba=68173 block cnt=7 obj#=79274 tim=105618071946

WAIT #140201128746576: nam='direct path write temp' ela= 503 file number=201 first dba=68187 block cnt=7 obj#=79274 tim=105618072529

WAIT #140201128746576: nam='direct path write temp' ela= 435 file number=201 first dba=68201 block cnt=7 obj#=79274 tim=105618073058

WAIT #140201128746576: nam='direct path read' ela= 120 file number=7 first dba=1666 block cnt=126 obj#=79274 tim=105618073258

.....

-- writing probe partitions to the disk in the parttioning phase

qerhnProbeChooseRowP (rwsid=1): inmem=0,mm=0,nf=0

WAIT #140201128746576: nam='direct path read' ela= 15 file number=7 first dba=60577 block cnt=15 obj#=79276 tim=105621371741

WAIT #140201128746576: nam='direct path read' ela= 14 file number=7 first dba=60593 block cnt=15 obj#=79276 tim=105621371820

WAIT #140201128746576: nam='PGA memory operation' ela= 5 p1=262144 p2=1 p3=0 obj#=79276 tim=105621371877

WAIT #140201128746576: nam='direct path read' ela= 14 file number=7 first dba=60609 block cnt=15 obj#=79276 tim=105621371939

WAIT #140201128746576: nam='PGA memory operation' ela= 3 p1=262144 p2=1 p3=0 obj#=79276 tim=105621371957

WAIT #140201128746576: nam='PGA memory operation' ela= 4 p1=262144 p2=1 p3=0 obj#=79276 tim=105621372055

WAIT #140201128746576: nam='direct path read' ela= 15 file number=7 first dba=60625 block cnt=15 obj#=79276 tim=105621372095

WAIT #140201128746576: nam='PGA memory operation' ela= 3 p1=262144 p2=1 p3=0 obj#=79276 tim=105621372144

WAIT #140201128746576: nam='PGA memory operation' ela= 2 p1=262144 p2=1 p3=0 obj#=79276 tim=105621372197

WAIT #140201128746576: nam='direct path read' ela= 14 file number=7 first dba=60641 block cnt=15 obj#=79276 tim=105621372229

WAIT #140201128746576: nam='PGA memory operation' ela= 3 p1=262144 p2=1 p3=0 obj#=79276 tim=105621372290

WAIT #140201128746576: nam='direct path read' ela= 14 file number=7 first dba=60657 block cnt=15 obj#=79276 tim=105621372328

WAIT #140201128746576: nam='PGA memory operation' ela= 3 p1=262144 p2=1 p3=0 obj#=79276 tim=105621372401

WAIT #140201128746576: nam='direct path read' ela= 1996 file number=7 first dba=60674 block cnt=126 obj#=79276 tim=105621374414

WAIT #140201128746576: nam='PGA memory operation' ela= 5 p1=262144 p2=1 p3=0 obj#=79276 tim=105621374513

WAIT #140201128746576: nam='direct path read' ela= 789 file number=7 first dba=60802 block cnt=126 obj#=79276 tim=105621375334

WAIT #140201128746576: nam='PGA memory operation' ela= 5 p1=262144 p2=1 p3=0 obj#=79276 tim=105621375421

WAIT #140201128746576: nam='PGA memory operation' ela= 3 p1=262144 p2=1 p3=0 obj#=79276 tim=105621375501

WAIT #140201128746576: nam='PGA memory operation' ela= 1 p1=262144 p2=1 p3=0 obj#=79276 tim=105621375573

WAIT #140201128746576: nam='PGA memory operation' ela= 2 p1=262144 p2=1 p3=0 obj#=79276 tim=105621375649

WAIT #140201128746576: nam='direct path read' ela= 402 file number=7 first dba=60930 block cnt=126 obj#=79276 tim=105621376707

WAIT #140201128746576: nam='direct path read' ela= 85 file number=7 first dba=61058 block cnt=126 obj#=79276 tim=105621377463

WAIT #140201128746576: nam='direct path read' ela= 922 file number=7 first dba=61186 block cnt=126 obj#=79276 tim=105621378868

WAIT #140201128746576: nam='direct path read' ela= 82 file number=7 first dba=61314 block cnt=126 obj#=79276 tim=105621379505

WAIT #140201128746576: nam='direct path read' ela= 857 file number=7 first dba=61442 block cnt=126 obj#=79276 tim=105621380924

WAIT #140201128746576: nam='direct path write temp' ela= 3811 file number=201 first dba=100571 block cnt=7 obj#=79276 tim=105621385152

WAIT #140201128746576: nam='direct path write temp' ela= 439 file number=201 first dba=100585 block cnt=7 obj#=79276 tim=105621385712

WAIT #140201128746576: nam='direct path read' ela= 109 file number=7 first dba=61570 block cnt=126 obj#=79276 tim=105621385865

WAIT #140201128746576: nam='direct path write temp' ela= 741 file number=201 first dba=100599 block cnt=7 obj#=79276 tim=105621386954

WAIT #140201128746576: nam='direct path write temp' ela= 403 file number=201 first dba=104199 block cnt=7 obj#=79276 tim=105621387473

WAIT #140201128746576: nam='direct path read' ela= 365 file number=7 first dba=61698 block cnt=126 obj#=79276 tim=105621387866

WAIT #140201128746576: nam='direct path write temp' ela= 2993 file number=201 first dba=104213 block cnt=7 obj#=79276 tim=105621390923

WAIT #140201128746576: nam='direct path write temp' ela= 396 file number=201 first dba=104227 block cnt=7 obj#=79276 tim=105621391431

WAIT #140201128746576: nam='direct path write temp' ela= 428 file number=201 first dba=104241 block cnt=7 obj#=79276 tim=105621391938

WAIT #140201128746576: nam='direct path write temp' ela= 385 file number=201 first dba=104255 block cnt=7 obj#=79276 tim=105621392361

WAIT #140201128746576: nam='direct path write temp' ela= 434 file number=201 first dba=104269 block cnt=7 obj#=79276 tim=105621392869

WAIT #140201128746576: nam='direct path write temp' ela= 836 file number=201 first dba=104283 block cnt=7 obj#=79276 tim=105621393742

WAIT #140201128746576: nam='direct path write temp' ela= 396 file number=201 first dba=104297 block cnt=7 obj#=79276 tim=105621394223

WAIT #140201128746576: nam='direct path write temp' ela= 396 file number=201 first dba=104311 block cnt=7 obj#=79276 tim=105621394727

WAIT #140201128746576: nam='direct path write temp' ela= 386 file number=201 first dba=107783 block cnt=7 obj#=79276 tim=105621395223

WAIT #140201128746576: nam='direct path read' ela= 103 file number=7 first dba=61826 block cnt=126 obj#=79276 tim=105621395391

For TEST3

-- writing build partitions to the disk in the build phase

kxhfWrite(HJ[1]): hash-join is spilling to disk

WAIT #139718328414072: nam='Disk file operations I/O' ela= 17 FileOperation=2 fileno=201 filetype=6 obj#=79274 tim=107049866623

WAIT #139718328414072: nam='direct path write temp' ela= 3020 file number=201 first dba=52992 block cnt=67 obj#=79274 tim=107049869662

WAIT #139718328414072: nam='direct path read' ela= 130 file number=7 first dba=1410 block cnt=126 obj#=79274 tim=107049870022

WAIT #139718328414072: nam='direct path write temp' ela= 1596 file number=201 first dba=60288 block cnt=67 obj#=79274 tim=107049871744

WAIT #139718328414072: nam='direct path write temp' ela= 1440 file number=201 first dba=115968 block cnt=67 obj#=79274 tim=107049873676

WAIT #139718328414072: nam='direct path read' ela= 401 file number=7 first dba=1538 block cnt=126 obj#=79274 tim=107049874260

WAIT #139718328414072: nam='direct path write temp' ela= 3495 file number=201 first dba=46464 block cnt=67 obj#=79274 tim=107049878141

WAIT #139718328414072: nam='direct path read' ela= 117 file number=7 first dba=1666 block cnt=126 obj#=79274 tim=107049878477

WAIT #139718328414072: nam='direct path read' ela= 395 file number=7 first dba=1794 block cnt=126 obj#=79274 tim=107049879366

WAIT #139718328414072: nam='direct path read' ela= 101 file number=7 first dba=1922 block cnt=126 obj#=79274 tim=107049879897

WAIT #139718328414072: nam='direct path read' ela= 608 file number=7 first dba=2050 block cnt=126 obj#=79274 tim=107049880970

WAIT #139718328414072: nam='direct path read' ela= 105 file number=7 first dba=2178 block cnt=126 obj#=79274 tim=107049881641

WAIT #139718328414072: nam='direct path write temp' ela= 3701 file number=201 first dba=50560 block cnt=67 obj#=79274 tim=107049885625

WAIT #139718328414072: nam='direct path read' ela= 430 file number=7 first dba=2306 block cnt=126 obj#=79274 tim=107049886431

WAIT #139718328414072: nam='direct path write temp' ela= 4135 file number=201 first dba=56192 block cnt=67 obj#=79274 tim=107049890712

WAIT #139718328414072: nam='direct path write temp' ela= 1642 file number=201 first dba=302464 block cnt=67 obj#=79274 tim=107049892638

WAIT #139718328414072: nam='direct path read' ela= 113 file number=7 first dba=2434 block cnt=126 obj#=79274 tim=107049893046

WAIT #139718328414072: nam='direct path write temp' ela= 1705 file number=201 first dba=345472 block cnt=67 obj#=79274 tim=107049894940

WAIT #139718328414072: nam='direct path write temp' ela= 2164 file number=201 first dba=347520 block cnt=67 obj#=79274 tim=107049897494

WAIT #139718328414072: nam='direct path write temp' ela= 1802 file number=201 first dba=48640 block cnt=67 obj#=79274 tim=107049899455

WAIT #139718328414072: nam='direct path read' ela= 382 file number=7 first dba=2562 block cnt=126 obj#=79274 tim=107049899940

WAIT #139718328414072: nam='direct path write temp' ela= 3674 file number=201 first dba=130688 block cnt=67 obj#=79274 tim=107049903779

WAIT #139718328414072: nam='direct path read' ela= 132 file number=7 first dba=2690 block cnt=126 obj#=79274 tim=107049904267

WAIT #139718328414072: nam='direct path read' ela= 393 file number=7 first dba=2818 block cnt=126 obj#=79274 tim=107049905009

WAIT #139718328414072: nam='direct path read' ela= 102 file number=7 first dba=2946 block cnt=126 obj#=79274 tim=107049905460

WAIT #139718328414072: nam='direct path write temp' ela= 3827 file number=201 first dba=102272 block cnt=67 obj#=79274 tim=107049909502

WAIT #139718328414072: nam='direct path read' ela= 423 file number=7 first dba=3074 block cnt=126 obj#=79274 tim=107049910232

WAIT #139718328414072: nam='direct path read' ela= 104 file number=7 first dba=3202 block cnt=126 obj#=79274 tim=107049910697

WAIT #139718328414072: nam='direct path write temp' ela= 3154 file number=201 first dba=80000 block cnt=67 obj#=79274 tim=107049913967

WAIT #139718328414072: nam='direct path read' ela= 367 file number=7 first dba=3330 block cnt=126 obj#=79274 tim=107049914727

WAIT #139718328414072: nam='direct path write temp' ela= 4121 file number=201 first dba=128640 block cnt=67 obj#=79274 tim=107049919272

WAIT #139718328414072: nam='direct path read' ela= 134 file number=7 first dba=3458 block cnt=126 obj#=79274 tim=107049919497

...

-- writing probe partitions to the disk in the parttioning phase

qerhnProbeChooseRowP (rwsid=1): inmem=0,mm=0,nf=0

WAIT #139718328414072: nam='direct path read' ela= 12 file number=7 first dba=60577 block cnt=15 obj#=79276 tim=107051448147

WAIT #139718328414072: nam='direct path read' ela= 13 file number=7 first dba=60593 block cnt=15 obj#=79276 tim=107051448269

WAIT #139718328414072: nam='direct path read' ela= 12 file number=7 first dba=60609 block cnt=15 obj#=79276 tim=107051448400

WAIT #139718328414072: nam='direct path read' ela= 12 file number=7 first dba=60625 block cnt=15 obj#=79276 tim=107051448457

WAIT #139718328414072: nam='direct path read' ela= 11 file number=7 first dba=60641 block cnt=15 obj#=79276 tim=107051448523

WAIT #139718328414072: nam='direct path read' ela= 12 file number=7 first dba=60657 block cnt=15 obj#=79276 tim=107051448581

WAIT #139718328414072: nam='direct path read' ela= 1942 file number=7 first dba=60674 block cnt=126 obj#=79276 tim=107051450573

WAIT #139718328414072: nam='direct path read' ela= 742 file number=7 first dba=60802 block cnt=126 obj#=79276 tim=107051451384

WAIT #139718328414072: nam='direct path read' ela= 1449 file number=7 first dba=60930 block cnt=126 obj#=79276 tim=107051453208

WAIT #139718328414072: nam='direct path read' ela= 115 file number=7 first dba=61058 block cnt=126 obj#=79276 tim=107051453698

WAIT #139718328414072: nam='direct path read' ela= 1077 file number=7 first dba=61186 block cnt=126 obj#=79276 tim=107051455171

WAIT #139718328414072: nam='direct path read' ela= 306 file number=7 first dba=61314 block cnt=126 obj#=79276 tim=107051455845

WAIT #139718328414072: nam='direct path read' ela= 992 file number=7 first dba=61442 block cnt=126 obj#=79276 tim=107051457246

WAIT #139718328414072: nam='direct path write temp' ela= 5045 file number=201 first dba=198144 block cnt=67 obj#=79276 tim=107051462570

WAIT #139718328414072: nam='direct path read' ela= 134 file number=7 first dba=61570 block cnt=126 obj#=79276 tim=107051462975

WAIT #139718328414072: nam='direct path write temp' ela= 1591 file number=201 first dba=193664 block cnt=67 obj#=79276 tim=107051465002

WAIT #139718328414072: nam='direct path read' ela= 356 file number=7 first dba=61698 block cnt=126 obj#=79276 tim=107051465458

WAIT #139718328414072: nam='direct path write temp' ela= 3392 file number=201 first dba=189568 block cnt=67 obj#=79276 tim=107051469260

WAIT #139718328414072: nam='direct path read' ela= 111 file number=7 first dba=61826 block cnt=126 obj#=79276 tim=107051469503

WAIT #139718328414072: nam='direct path write temp' ela= 1699 file number=201 first dba=185600 block cnt=67 obj#=79276 tim=107051471362

WAIT #139718328414072: nam='direct path write temp' ela= 1534 file number=201 first dba=180992 block cnt=67 obj#=79276 tim=107051473045

WAIT #139718328414072: nam='direct path write temp' ela= 1866 file number=201 first dba=177280 block cnt=67 obj#=79276 tim=107051475071

WAIT #139718328414072: nam='direct path write temp' ela= 1486 file number=201 first dba=173824 block cnt=67 obj#=79276 tim=107051476803

WAIT #139718328414072: nam='direct path read' ela= 349 file number=7 first dba=61954 block cnt=126 obj#=79276 tim=107051477330

WAIT #139718328414072: nam='direct path write temp' ela= 3576 file number=201 first dba=169856 block cnt=67 obj#=79276 tim=107051481211

WAIT #139718328414072: nam='direct path read' ela= 108 file number=7 first dba=62082 block cnt=126 obj#=79276 tim=107051481564

WAIT #139718328414072: nam='direct path read' ela= 371 file number=7 first dba=62210 block cnt=126 obj#=79276 tim=107051482291

WAIT #139718328414072: nam='direct path read' ela= 95 file number=7 first dba=62338 block cnt=126 obj#=79276 tim=107051482745

WAIT #139718328414072: nam='direct path read' ela= 650 file number=7 first dba=62466 block cnt=126 obj#=79276 tim=107051483723

WAIT #139718328414072: nam='direct path read' ela= 254 file number=7 first dba=62594 block cnt=126 obj#=79276 tim=107051484330

WAIT #139718328414072: nam='direct path write temp' ela= 5174 file number=201 first dba=191616 block cnt=67 obj#=79276 tim=107051489630

WAIT #139718328414072: nam='direct path write temp' ela= 1524 file number=201 first dba=196224 block cnt=67 obj#=79276 tim=107051491352

qerhnUpdateFilterStats(HJ[1]): Disabled bitmap filtering...

The smaller cluster size caused large number of small partitions, we had 128 partitions and each of them at times in memory had almost one slot (in total we had 132 slots). It means, when reading data from the tables (build and probe) with multi block read (as trace file says 126 blocks) was distributed 128 partitions and these blocks filled quickly then these slots had to be written to disk (each of them to separate disk partitions) very often. The DB performed lots of I/O requests with small chunks (7 blocks) and it took time. In this case even the platform supports asynchronous I/O, it is impossible to perform writing asynchronously, because at least 2 slots are required to do that. Same thing is true for TEST3 also. As we have pointed out for the TEST1 the number of partitions could have been selected as 64 blocks instead of 128 and cluster size would be 14 for the improvement.

If you noticed, even TEST2 is better than TEST1, despite of two-pass join. Because the size of the partitions is big and it restricted to perform lots of small write requests to the disk at certain percent.

The TEST1 was true one-pass on disk HJ. So, to get larger cluster size and reduce number of partitions properly as per (F.3) we should increase the memory.

For example if you set pga_aggregate_target=200M and repeat the TEST1 again then:

Join Type: INNER join

Original hash-area size: 14346840

Memory for slot table: 12189696

Calculated overhead for partitions and row/slot managers: 2157144

Hash-join fanout: 32

Number of partitions: 32

Number of slots: 48

Multiblock IO: 31

Block size(KB): 8

Cluster (slot) size(KB): 248

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 2048

Per partition bit vector length(KB): 64

Maximum possible row length: 8071

Estimated build size (KB): 229

Estimated Build Row Length (includes overhead): 8031

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

kxhfAddChunk(HJ[1]): add chunk 0 (sz=64) to slot table

kxhfAddChunk(HJ[1]): chunk 0 (lbs=0x7f938e7037e0, slotTab=0x7f938e703a00) added

kxhfWrite(HJ[1]): hash-join is spilling to disk

*** 2020-05-23T02:52:36.290573-04:00

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=8023)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 8023

Revised build size: 235036KB

SQL_ID a8dmqqcbak2uu, child number 0

-------------------------------------

SELECT * FROM t1, t2 WHERE t1.id = t2.id

Plan hash value: 1838229974

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 20999 |00:00:06.31 | 270K| 269K| 89559 | | | | |

|* 1 | HASH JOIN | | 1 | 30000 | 20999 |00:00:06.31 | 270K| 269K| 89559 | 244M| 14M| 14M (1)| 723M|

| 2 | TABLE ACCESS FULL| T1 | 1 | 30000 | 30000 |00:00:00.38 | 90004 | 60002 | 0 | | | | |

| 3 | TABLE ACCESS FULL| T2 | 1 | 60000 | 60000 |00:00:00.64 | 180K| 120K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T1"."ID"="T2"."ID")

It is still one-pass and on disk HJ, but with larger cluster size, so small number of larger partitions. None of partitions were kept in the memory but performance improved significantly.

The TEST4

alter system set pga_aggregate_target=600M;

alter session set tracefile_identifier='PGA_600M';

alter session set events '10104 trace name context forever';

SQL_ID a8dmqqcbak2uu, child number 0

-------------------------------------

SELECT * FROM t1, t2 WHERE t1.id = t2.id

Plan hash value: 1838229974

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 20999 |00:00:05.65 | 270K| 246K| 66526 | | | | |

|* 1 | HASH JOIN | | 1 | 30000 | 20999 |00:00:05.65 | 270K| 246K| 66526 | 244M| 14M| 106M (1)| 540M|

| 2 | TABLE ACCESS FULL| T1 | 1 | 30000 | 30000 |00:00:00.30 | 90004 | 60002 | 0 | | | | |

| 3 | TABLE ACCESS FULL| T2 | 1 | 60000 | 60000 |00:00:00.56 | 180K| 120K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T1"."ID"="T2"."ID")

SELECT *

FROM t1, t2

WHERE t1.id = t2.id

call count cpu elapsed disk query current rows

------- ------ -------- ---------- ---------- ---------- ---------- ----------

Parse 1 0.00 0.00 0 0 0 0

Execute 1 0.00 0.00 0 0 0 0

Fetch 1401 1.78 5.69 246531 270533 0 20999

------- ------ -------- ---------- ---------- ---------- ---------- ----------

total 1403 1.79 5.70 246531 270533 0 20999

Misses in library cache during parse: 1

Optimizer mode: ALL_ROWS

Parsing user id: 106

Number of plan statistics captured: 1

Rows (1st) Rows (avg) Rows (max) Row Source Operation

---------- ---------- ---------- ---------------------------------------------------

20999 20999 20999 HASH JOIN (cr=270589 pr=246542 pw=66526 time=5650978 us starts=1 cost=65570 size=480930000 card=30000)

30000 30000 30000 TABLE ACCESS FULL T1 (cr=90004 pr=60002 pw=0 time=300684 us starts=1 cost=10474 size=240330000 card=30000)

60000 60000 60000 TABLE ACCESS FULL T2 (cr=180529 pr=120003 pw=0 time=561738 us starts=1 cost=20929 size=481200000 card=60000)

Elapsed times include waiting on following events:

Event waited on Times Max. Wait Total Waited

---------------------------------------- Waited ---------- ------------

SQL*Net message to client 1401 0.00 0.00

PGA memory operation 202 0.00 0.00

Disk file operations I/O 2 0.00 0.00

db file sequential read 5 0.00 0.00

direct path read 1428 0.01 0.42

asynch descriptor resize 9 0.00 0.00

direct path write temp 2146 0.01 4.00

direct path read temp 2146 0.00 0.10

SQL*Net more data to client 4 0.00 0.00

SQL*Net message from client 1401 0.00 0.20

********************************************************************************

This test was also done in one-pass mode, but we had 16M for HASH_AREA_SIZE and as it is seen in this case asynch I/O was possible. But still writing partitions to the disk is the dominant part of the process.

Join Type: INNER join

Original hash-area size: 16443992

Memory for slot table: 12189696

Calculated overhead for partitions and row/slot managers: 4254296

Hash-join fanout: 32

Number of partitions: 32

Number of slots: 48

Multiblock IO: 31

Block size(KB): 8

Cluster (slot) size(KB): 248

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 4096

Per partition bit vector length(KB): 128

Maximum possible row length: 8071

Estimated build size (KB): 229

Estimated Build Row Length (includes overhead): 8031

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

…

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=8023)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 8023

Revised build size: 235036KB

The number of partitions reduced and cluster size increased. According to the (F.4):

235036/(12189696/1024)<NP<12189696/1024/8/C => 19.7< NP < 1488/C => NP=32

--and then from (F.7) we get:

C < 12189696/1024/8/32 = 46.5.

Although it should have been possible to select cluster size as 40 cluster, but oracle selected it as 31. Also, quite enough memory allowed the database to keep some partitions completely in memory the end of build phase and gave the improvement.

*** RowSrcId: 1 HASH JOIN BUILD HASH TABLE (PHASE 1) ***

Total number of partitions: 32

Number of partitions left in memory: 12

Total number of rows in in-memory partitions: 11225

(used as preliminary number of buckets in hash table)

Estimated max # of build rows that can fit in avail memory: 49352

### Partition Distribution ###

Partition:0 rows:974 clusters:0 slots:0 kept=0

Partition:1 rows:994 clusters:0 slots:0 kept=0

Partition:2 rows:933 clusters:0 slots:0 kept=0

Partition:3 rows:902 clusters:0 slots:0 kept=0

Partition:4 rows:921 clusters:0 slots:0 kept=0

Partition:5 rows:917 clusters:0 slots:0 kept=0

Partition:6 rows:968 clusters:0 slots:0 kept=0

Partition:7 rows:921 clusters:0 slots:0 kept=0

Partition:8 rows:967 clusters:0 slots:0 kept=0

Partition:9 rows:961 clusters:0 slots:0 kept=0

Partition:10 rows:971 clusters:0 slots:0 kept=0

Partition:11 rows:941 clusters:0 slots:0 kept=0

Partition:12 rows:929 clusters:0 slots:0 kept=0

Partition:13 rows:970 clusters:0 slots:0 kept=0

Partition:14 rows:895 clusters:0 slots:0 kept=0

Partition:15 rows:927 clusters:0 slots:0 kept=0

Partition:16 rows:882 clusters:0 slots:0 kept=0

Partition:17 rows:932 clusters:0 slots:0 kept=0

Partition:18 rows:937 clusters:0 slots:0 kept=0

Partition:19 rows:933 clusters:0 slots:0 kept=0

Partition:20 rows:962 clusters:31 slots:1 kept=1

Partition:21 rows:989 clusters:32 slots:1 kept=1

Partition:22 rows:939 clusters:30 slots:1 kept=1

Partition:23 rows:909 clusters:29 slots:1 kept=1

Partition:24 rows:921 clusters:30 slots:1 kept=1

Partition:25 rows:933 clusters:30 slots:1 kept=1

Partition:26 rows:875 clusters:28 slots:1 kept=1

Partition:27 rows:957 clusters:31 slots:1 kept=1

Partition:28 rows:923 clusters:30 slots:1 kept=1

Partition:29 rows:926 clusters:30 slots:1 kept=1

Partition:30 rows:948 clusters:31 slots:3 kept=1

Partition:31 rows:943 clusters:30 slots:14 kept=1

*** (continued) HASH JOIN BUILD HASH TABLE (PHASE 1) ***

Revised number of hash buckets (after flushing): 11225

The TEST5

Of course, Oracle does not always follow our above assumption. This test we will see that high number of partitions are selected.

create table t4

tablespace users

as

select 10001+rownum as id

, trunc(sysdate)-trunc(level/1000) dat

, round(level/10,2) amount

, rpad('x', 10000) as f1

, rpad('y', 10000) as f2

, rpad('x', 10000) as f3

, rpad('y', 10000) as f4

, rpad('x', 10000) as f5

, rpad('y', 10000) as f6

from dual

connect by

level <= 6e4

;

create table t5 tablespace users parallel 2 as select * from t4 ;

alter table t5 parallel 1;

alter system set pga_aggregate_target=1g;

SQL_ID a1ajqys4at9sj, child number 0

-------------------------------------

SELECT * FROM t4, t5 WHERE t4.id = t5.id

Plan hash value: 3579674918

--------------------------------------------------------------------------------------------------------------------------------------------

| Id | Operation | Name | Starts | E-Rows | A-Rows | A-Time | Buffers | Reads | Writes | OMem | 1Mem | Used-Mem | Used-Tmp|

--------------------------------------------------------------------------------------------------------------------------------------------

| 0 | SELECT STATEMENT | | 1 | | 960K|00:03:46.81 | 3198K| 3022K| 1420K| | | | |

|* 1 | HASH JOIN | | 1 | 954K| 960K|00:03:46.81 | 3198K| 3022K| 1420K| 5692M| 56M| 103M (1)| 11G|

| 2 | TABLE ACCESS FULL| T4 | 1 | 240K| 240K|00:00:04.39 | 1599K| 800K| 0 | | | | |

| 3 | TABLE ACCESS FULL| T5 | 1 | 240K| 240K|00:00:04.50 | 1599K| 800K| 0 | | | | |

--------------------------------------------------------------------------------------------------------------------------------------------

Predicate Information (identified by operation id):

---------------------------------------------------

1 - access("T4"."ID"="T5"."ID")

*** RowSrcId: 1 HASH JOIN STATISTICS (INITIALIZATION) ***

Join Type: INNER join

Original hash-area size: 101918155

Memory for slot table: 97517568

Calculated overhead for partitions and row/slot managers: 4400587

Hash-join fanout: 256

Number of partitions: 256

Number of slots: 384

Multiblock IO: 31

Block size(KB): 8

Cluster (slot) size(KB): 248

Minimum number of bytes per block: 8160

Bit vector memory allocation(KB): 4096

Per partition bit vector length(KB): 16

Maximum possible row length: 24079

Estimated build size (KB): 5505

Estimated Build Row Length (includes overhead): 24054

# Immutable Flags:

Evaluate Left Input Row Vector

Evaluate Right Input Row Vector

# Mutable Flags:

Not BUFFER(execution) output of the join for PQ

IO sync

kxhfSetPhase(HJ[1]): phase=BUILD

WAIT #140460853627488: nam='Disk file operations I/O' ela= 19 FileOperation=2 fileno=7 filetype=2 obj#=79792 tim=1728278840

WAIT #140460853627488: nam='db file sequential read' ela= 451 file#=7 block#=181378 blocks=1 obj#=79792 tim=1728279303

....

kxhfSetPhase(HJ[1]): phase=PROBE_1

qerhnFetch(HJ[1]): max build row length (mbl=24041)

*** RowSrcId: 1 END OF BUILD (PHASE 1) ***

Revised row length: 24041

Revised build size: 5634579KB

********************************************************************************

SELECT *

FROM t4, t5

WHERE t4.id = t5.id

call count cpu elapsed disk query current rows

------- ------ -------- ---------- ---------- ---------- ---------- ----------

Parse 1 0.00 0.00 0 0 0 0

Execute 1 0.00 0.00 0 0 0 0

Fetch 64001 39.30 240.09 3022397 3198089 0 960000

------- ------ -------- ---------- ---------- ---------- ---------- ----------

total 64003 39.31 240.10 3022397 3198089 0 960000

Misses in library cache during parse: 1

Optimizer mode: ALL_ROWS

Parsing user id: 106

Number of plan statistics captured: 1

Rows (1st) Rows (avg) Rows (max) Row Source Operation

---------- ---------- ---------- ---------------------------------------------------

960000 960000 960000 HASH JOIN (cr=3198145 pr=3022407 pw=1420978 time=232736486 us starts=1 cost=980133 size=45875317488 card=954781)

240000 240000 240000 TABLE ACCESS FULL T4 (cr=1599058 pr=800668 pw=0 time=5110328 us starts=1 cost=217453 size=5765760000 card=240000)

240000 240000 240000 TABLE ACCESS FULL T5 (cr=1599031 pr=800751 pw=0 time=5080920 us starts=1 cost=217190 size=5765760000 card=240000)

Elapsed times include waiting on following events:

Event waited on Times Max. Wait Total Waited

---------------------------------------- Waited ---------- ------------

SQL*Net message to client 64001 0.00 0.05

PGA memory operation 252 0.00 0.00

Disk file operations I/O 2 0.00 0.00

db file sequential read 2360 0.02 0.15

direct path read 12528 0.01 4.16

asynch descriptor resize 3 0.00 0.00

direct path write temp 45838 0.06 178.93

direct path read temp 45838 0.03 26.99

SQL*Net more data to client 11 0.00 0.00

SQL*Net message from client 64001 0.02 28.75

********************************************************************************

NP could have been calculated as:

NP > 5634579/(101918155/1024)=56.61, so NP=64.

But high number of partitions caused small cluster size. if database decides to produce large number of small partitions then the NP should be large enough for keep some of them into the memory at the end of the build phase. In our last case none of 256 partitions was not stored in the memory, in contrast C was small and got inefficient i/o. if NP was selected as 64 then C would be big and i/o time would be reduced.

To improve one-pass HJ performance the minimum memory defined by (F.6) should be increased. And this increasing will cause two things:

1) If the increased memory not sufficiently big but it would allow Oracle to select bigger cluster size and small number of partitions and as a result HJ performance will be improved.

2) If the memory sufficiently increased, then oracle will be able to define optimal cluster size and number of partitions and some of them will be kept in the memory (the writing partitions to the disk part will be reduced), as a result significant improvement will be gain.

To increase memory the following could be considered (roughly):

MEM = SQRT(T1*C) + k*|Pi| = SQRT(T1*C) + k|T1|/NP

--If you want to keep k number of partitions in the memory

Or

MEM = SQRT(T1*C) +p*|T1|

--If you want to keep p percent of |T1| in the memory

Summary

The One-pass HJ is effective if there is enough memory to store some partitions in it. Multi-pass join might give better response time than pure One-pass join (which is on disk) in certain situation. Manual work area management is not good of course, it does not use available memory efficiently, but depending on conditions in some cases, it could be much efficient than pure one-pass auto join. Oracle tries to produce large number of partitions with small sizes in general. It is good if we have enough memory to keep some partitions in memory and reduce writing of full build/probe tables to the temporary segment. Otherwise as we see, it caused lots of small I/O requests to write data to the disk. Re-reading might not be the main problem of the HJ. Because in this case small number of but large partitions could be used to take advantage of I/O. We saw that the build/partition phase might be most dominant part of the HJ not the join phase itself.

Finally, number of partitions and cluster size are identified by runtime engine during the HJ, but available memory depends on activities in the DB (active work areas) so memory pressure plays important role during the HJ execution.

Useful materials:

[1] Lewis, Jonathan “Cost-Based Oracle Fundamentals”, 2006

[2] Prabhaker “GP” Gongloor, Sameer Patkar. Oracle Technical Report

“Hash Joins Implementation and Tuning Release 7.3” ACTA March 1997